Monitoring your Kubernetes environment is important — especially if you’re running production workloads. Let’s say you’ve already done the work of collecting the Kubernetes audit logs… what’s next? What should you actually be looking for?

We’ve been working on Kubernetes security monitoring for a while and have some insights to share. Whether you run Kubernetes yourself or use a managed provider like GKE, EKS, or AKS, certain events are worth investigating. They might indicate a mistake or, in the worst case, an attacker poking around inside your cluster.

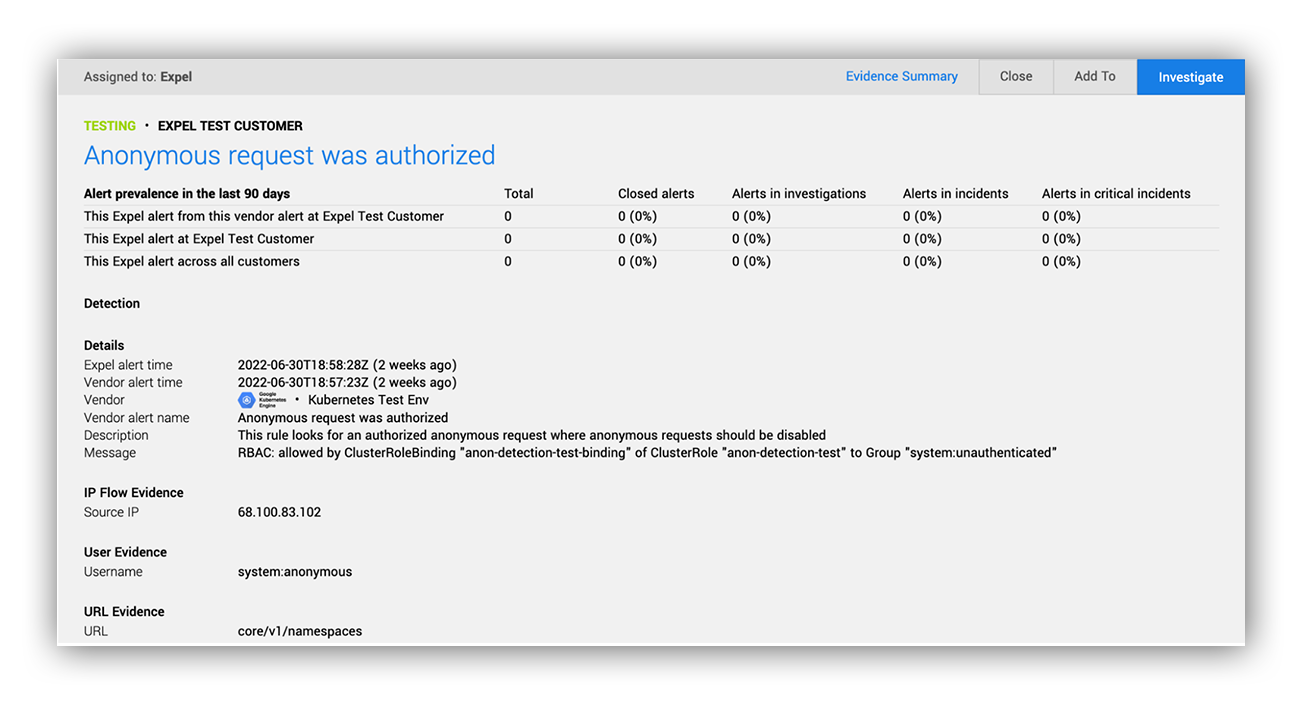

Successful authorization of an anonymous request

Okay, so nobody has Kubernetes clusters with public endpoints anymore, right? … Right? (Cue awkward silence…) As it turns out, this is still really common. A recent internet scan by Shadowserver found nearly 400,000 publicly accessible Kubernetes API endpoints. We’re not here to name and shame, and there are some real reasons you may want a public API endpoint. It’s pretty convenient, for example. But that convenience comes with associated risk. You’ll want to make sure that anonymous access is disabled (or well controlled) to avoid leaking sensitive information about your workloads (or worse, secrets that lead to a larger compromise).

Luckily, this is easy to detect using the Kubernetes audit log. Anonymous requests are logged with the username “system:anonymous” and the group “system:unauthenticated”, so you can look for any of those requests that were allowed (the audit event’s “authorization.k8s.io/decision” annotation is set to “allow”). Watch especially for requests involving unexpected resource kinds.

Quick tip: Some managed providers have default built-in roles that grant anonymous users very limited permissions for cluster discovery. Examples include GKE’s system:discovery and system:public-info-viewer roles. Anonymous requests authorized by these default roles might be okay, depending on your risk model.
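To make this concrete, here is a minimal Python sketch that filters parsed audit events for allowed anonymous requests. It assumes the API server writes JSON audit events at Metadata level or higher, and it treats a request as expected when its `authorization.k8s.io/reason` annotation says it was allowed through one of the discovery roles from the tip above. That reason-string matching and the `EXPECTED_ANONYMOUS_ROLES` allowlist are our assumptions, so check what your provider actually records before relying on them.

```python
from typing import Iterable, Iterator

# Assumed allowlist of discovery roles that anonymous users may legitimately hit.
# Adjust to your provider and risk model.
EXPECTED_ANONYMOUS_ROLES = ("system:public-info-viewer", "system:discovery")


def allowed_anonymous_requests(events: Iterable[dict]) -> Iterator[dict]:
    """Yield audit events where an anonymous request was authorized."""
    for event in events:
        # A request can be logged at several stages; keep only the final one.
        if event.get("stage") != "ResponseComplete":
            continue
        if event.get("user", {}).get("username") != "system:anonymous":
            continue
        annotations = event.get("annotations", {})
        if annotations.get("authorization.k8s.io/decision") != "allow":
            continue
        # Skip requests the RBAC reason attributes to an expected discovery role.
        # Matching on 'ClusterRole "<name>"' checks the role, not the binding name.
        reason = annotations.get("authorization.k8s.io/reason", "")
        if any(f'ClusterRole "{role}"' in reason for role in EXPECTED_ANONYMOUS_ROLES):
            continue
        yield event
```

For a log file with one JSON event per line, you could feed it a generator such as `(json.loads(line) for line in f)`.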

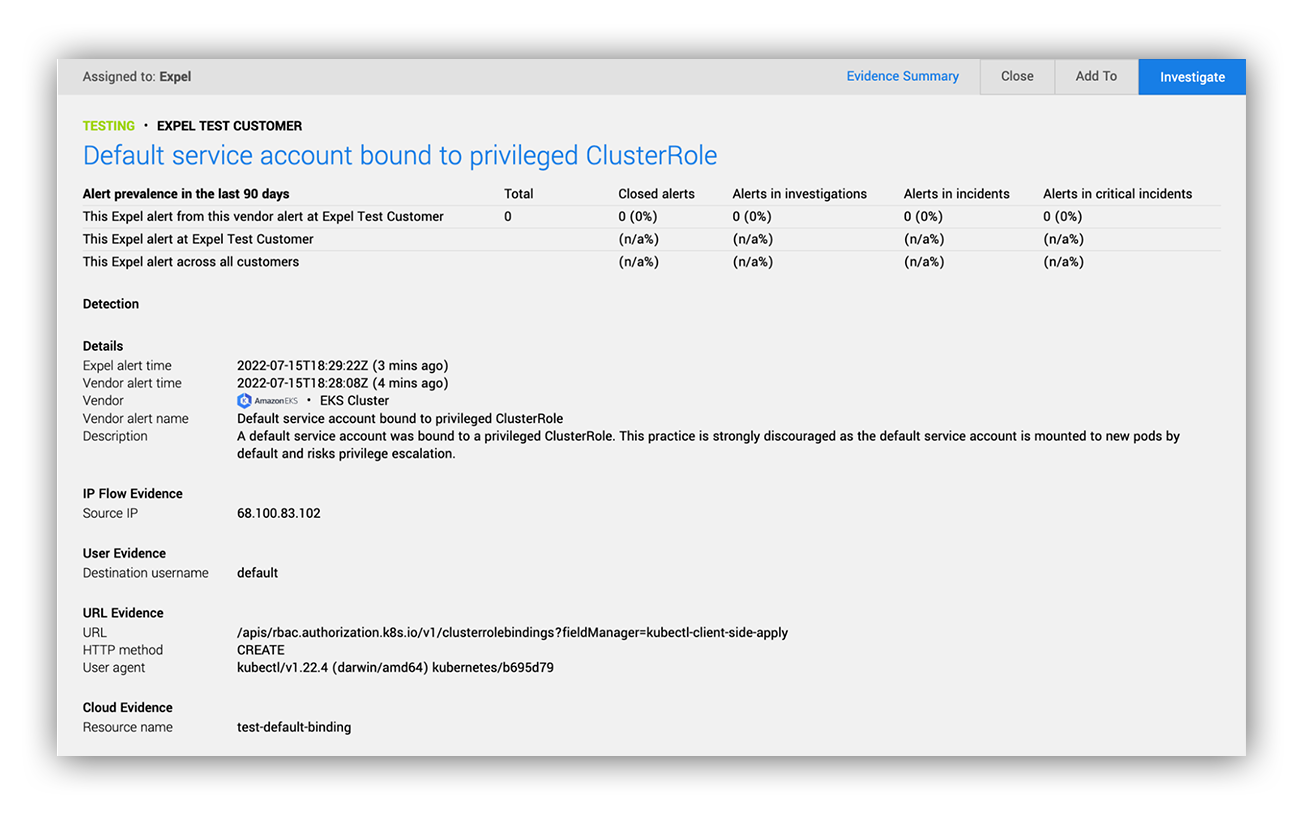

Default service account bound to privileged cluster role

Default service accounts are one of the most common ways to escalate privileges in Kubernetes. Unless you explicitly change this behavior, Kubernetes creates a default service account in each namespace and automatically mounts its credentials into every pod that doesn’t specify a different service account. This isn’t a huge issue if you aren’t using the default service account for anything, since it has no permissions of its own by default. However, if you grant a default service account permissions through a role binding, an attacker who compromises any pod using it could turn those permissions against you.

For this reason, it’s a good idea to watch for the creation of a cluster role binding that maps a default service account to a privileged cluster role. This has the unintended effect of granting cluster-wide permissions to every pod created in that service account’s namespace (except pods that opt out of mounting the credentials or use a different service account). Either way, it’s a dangerous practice that usually exposes credentials with API permissions unnecessarily.

This is also easy to detect in the Kubernetes audit log: look for the creation or modification of a role binding or cluster role binding where the subjects include a default service account and the referenced role is privileged (like view, edit, admin, or cluster-admin).

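Quick tip: Service account usernames take the form “system:serviceaccount:<namespace>:<name>”, so default service accounts start with “system:serviceaccount:” and end with “:default”. In binding subjects, they usually appear as kind ServiceAccount with the name default.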
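The same check can be sketched in Python under similar assumptions. It only inspects `create` and `update` events, because `requestObject` is recorded only at the Request or RequestResponse audit level and, for a `patch`, holds the patch body rather than the full binding. The `PRIVILEGED_ROLES` set mirrors the examples above and is meant to be extended; the function names are our own.

```python
from typing import Iterable, Iterator

# Roles treated as privileged, following the examples above; extend as needed.
PRIVILEGED_ROLES = {"view", "edit", "admin", "cluster-admin"}
BINDING_RESOURCES = {"rolebindings", "clusterrolebindings"}


def binds_default_service_account(subject: dict) -> bool:
    """Return True if an RBAC subject refers to a default service account."""
    kind = subject.get("kind")
    name = subject.get("name", "")
    if kind == "ServiceAccount":
        return name == "default"
    if kind == "User":
        # A service account can also be referenced by its full username.
        return name.startswith("system:serviceaccount:") and name.endswith(":default")
    return False


def default_sa_privileged_bindings(events: Iterable[dict]) -> Iterator[dict]:
    """Yield events that create or replace a binding giving a default
    service account a privileged role."""
    for event in events:
        if event.get("stage") != "ResponseComplete":
            continue
        if event.get("verb") not in ("create", "update"):
            continue
        if event.get("objectRef", {}).get("resource") not in BINDING_RESOURCES:
            continue
        # requestObject is only logged at the Request or RequestResponse level.
        binding = event.get("requestObject") or {}
        if binding.get("roleRef", {}).get("name") not in PRIVILEGED_ROLES:
            continue
        subjects = binding.get("subjects") or []
        if any(binds_default_service_account(s) for s in subjects):
            yield event
```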

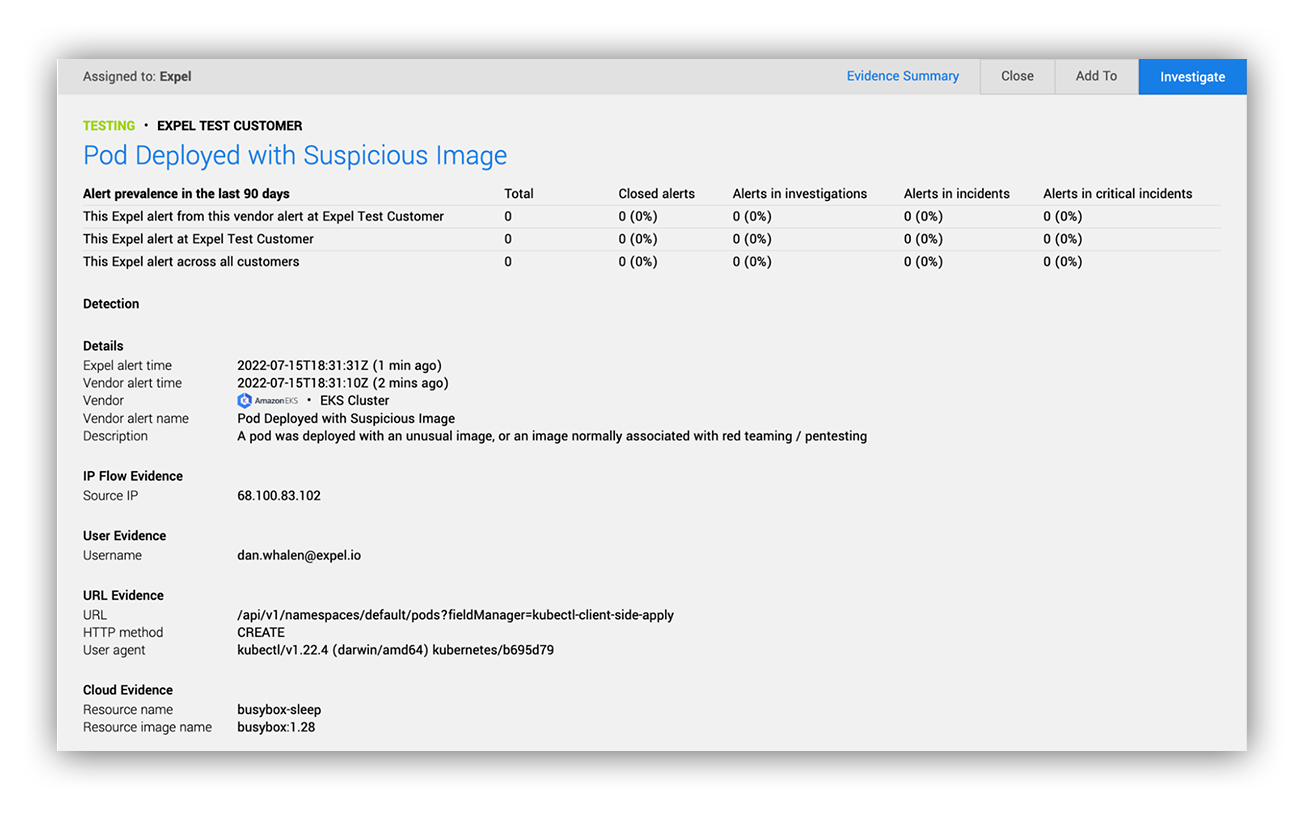

Pod created with an unusual image

It’s a good idea to get a handle on the images running in your cluster. From what we’ve seen of the Kubernetes threat landscape so far, coin mining is a common goal for opportunistic attackers. It isn’t sophisticated, and it’s not a good look if you’re affected. We recommend a deployment model with only one way to deploy images (usually a CI/CD service) rather than letting users create pods manually. If you adopt this approach and expect images to come only from your private image repository, you have a great opportunity to spot pods that don’t follow those rules. Even if your deployment process isn’t locked down to that degree, there are probably some images you never expect to see in your clusters, and those are worth examining.

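Quick tip: Pod images are logged as written in the pod spec, typically in the format <repository hostname>/<image name>:<tag>. This makes it easy to look out for unexpected repositories or image names. Note that images pulled from Docker Hub often omit the hostname entirely (for example, nginx:latest).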
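Here is a similar Python sketch for flagging pod creations that use images from outside an allowlist. `ALLOWED_PREFIXES` and the `registry.example.com/` hostname are hypothetical placeholders for your own private registry, and the check again assumes `requestObject` is logged (Request level or higher) for pod creations.

```python
from typing import Iterable, Iterator, List, Tuple

# Hypothetical allowlist: replace with the registry prefixes you actually deploy from.
ALLOWED_PREFIXES = ("registry.example.com/",)


def pod_images(pod: dict) -> List[str]:
    """Collect container image references from a pod object."""
    spec = pod.get("spec") or {}
    images = []
    for key in ("initContainers", "containers"):
        for container in spec.get(key) or []:
            if "image" in container:
                images.append(container["image"])
    return images


def unusual_pod_images(events: Iterable[dict]) -> Iterator[Tuple[dict, str]]:
    """Yield (event, image) pairs for pod creations using an unexpected image."""
    for event in events:
        if event.get("stage") != "ResponseComplete" or event.get("verb") != "create":
            continue
        ref = event.get("objectRef", {})
        # Only pod creations; subresources such as pods/exec or pods/binding are skipped.
        if ref.get("resource") != "pods" or ref.get("subresource"):
            continue
        for image in pod_images(event.get("requestObject") or {}):
            # str.startswith accepts a tuple of allowed prefixes.
            if not image.startswith(ALLOWED_PREFIXES):
                yield event, image
```

A prefix allowlist is deliberately strict: an image written without a hostname, such as nginx:latest, is flagged too, which is usually what you want if everything should come from your own registry.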

Taking it to the next level

The Kubernetes audit log is a great source of high-fidelity security signals. We’ve walked through three ideas to get you started, but there’s a whole world of opportunity to build out security alerting that helps you identify and quickly respond to issues before they become full-on crises.

Expel aims to make Kubernetes security accessible to everyone. If you’d like to learn more about how we can help, contact us.