Artificial intelligence (AI) is a huge boost for threat detection thanks to its speed, proactivity, automation, efficiency, and ability to promote collaboration. (More accurately, we’re talking about machine learning (ML), although “AI” has captured the popular fancy.)

How many cyberattacks are there per year?

Let’s think about the attack vectors. One source estimates that phishing emails alone account for 3.4 billion attacks. Per day. There are more than 15,000 business email compromises and 3,640 successful ransomware attacks annually. Then there are malware, password attacks, man-in-the-middle attacks, SQL injection attacks, DDoS attacks, cryptojacking, zero-day exploits, spoofing, identity-based attacks, code injection attacks, supply chain attacks, DNS tunneling, DNS spoofing, IoT-based attacks, corporate account takeover, whale-phishing, spear-phishing, brute force attacks, cross-site scripting attacks, fileless malware, advanced persistent threats, and a few more.

So…a lot.

As you might imagine, dealing with that sort of volume using traditional detection and response methods is essentially impossible, so many security teams (and the tools they use) struggle to keep up.

Enter ML. Let’s have a look at how these systems can augment human capabilities by improving threat detection and powering proactive defenses.

Machine learning enhances threat detection

Conventional (i.e., old-school) approaches to threat detection (and even hunting) involve manual analysis of alerts, logs, and indicators of compromise (IOCs), which is error-prone and intensely time-consuming. Even with automation added for security alerts, there’s a huge need for triage. The process is retroactive: it tries to identify suspicious or malicious activity that is already in your environment, and it often yields high false-positive rates.

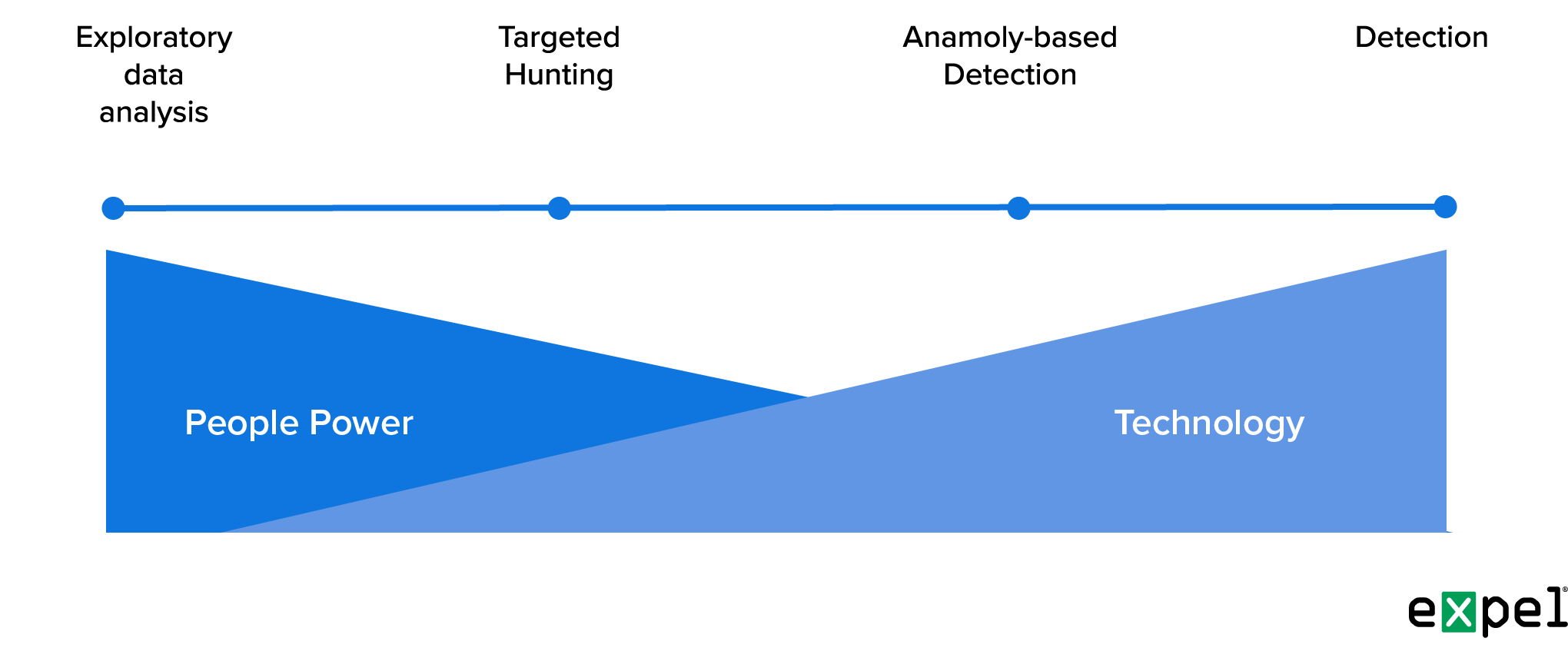

This diagram illustrates the approach we employ at Expel; it also highlights where tools, such as ML used to automate hypothesis-based hunting, intersect with the threat hunting process.

One of ML’s primary advantages is its ability to analyze vast amounts of data at blinding speed, identifying patterns and anomalies that may indicate the presence of a threat as it goes.

Machine learning algorithms also train systems on historical data. By continuously analyzing network traffic, system logs, and user behavior, ML-powered hunting solutions can identify potential threats that might have gone unnoticed by manual methods, or even by the alerts within your security tools.
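
To make the idea of learning from historical data concrete, here is a minimal sketch that builds a per-user baseline from past activity and flags large deviations. It uses a plain z-score rather than a trained model, and the function name, the daily login counts, and the threshold of 3 are all assumptions for illustration, not a description of our production logic.

```python
from statistics import mean, stdev


def find_anomalies(history, today, threshold=3.0):
    """Return {user: z_score} for users whose count today is far from their baseline.

    history: {user: [past daily counts]} (hypothetical input shape)
    today:   {user: count observed today}
    """
    flagged = {}
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 2:
            continue  # not enough history to build a baseline
        mu = mean(past)
        sigma = stdev(past) or 1.0  # avoid dividing by zero on flat history
        z = (count - mu) / sigma
        if abs(z) >= threshold:
            flagged[user] = round(z, 2)
    return flagged


# Illustrative data only: daily login counts per user.
history = {
    "alice": [4, 5, 6, 5, 4, 6, 5],
    "bob": [20, 22, 19, 21, 20, 23, 21],
}
today = {"alice": 5, "bob": 95}

anomalies = find_anomalies(history, today)
print(anomalies)  # only bob's spike should be reported
```

A real system would use far richer features than a single count, but the shape is the same: learn what normal looks like, then surface what doesn’t fit.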

Proactive defense

ML can also detect previously unknown threats by identifying behavioral patterns that deviate from normal activity. One way to do this is to tokenize or hash the streams of logs so they can be compared against each other (note: this requires a large sample size with multiple months’ worth of raw logs). Organizations can then respond quickly to emerging threats, minimizing the potential damage and reducing the dwell time of attackers within the network.
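
As a rough illustration of tokenizing and hashing log streams so they can be compared, the sketch below masks the variable parts of each line (IPs, hex values, numbers), hashes the remaining template, and flags new lines whose template never appeared in the baseline. The masking rules, function names, and sample log lines are assumptions made up for this example; real pipelines need far more data and more careful parsing.

```python
import hashlib
import re
from collections import Counter

# Patterns for the variable parts of a log line (illustrative, not exhaustive).
_IP = re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b")
_HEX = re.compile(r"\b0x[0-9a-fA-F]+\b")
_NUM = re.compile(r"\b\d+\b")


def template_hash(line):
    """Mask variable fields, tokenize on whitespace, and hash the template."""
    masked = _IP.sub("<IP>", line)
    masked = _HEX.sub("<HEX>", masked)
    masked = _NUM.sub("<NUM>", masked)
    tokens = masked.lower().split()
    return hashlib.sha256(" ".join(tokens).encode("utf-8")).hexdigest()[:16]


def build_baseline(lines):
    """Count how often each template hash appears in historical logs."""
    return Counter(template_hash(line) for line in lines)


def rare_lines(baseline, new_lines, max_seen=0):
    """Return new lines whose template was seen at most max_seen times before."""
    return [line for line in new_lines if baseline[template_hash(line)] <= max_seen]


# Hypothetical sample data.
baseline_logs = [
    "Accepted password for alice from 10.0.0.5 port 51234",
    "Accepted password for alice from 10.0.0.9 port 50211",
    "Session opened for alice pid 4021",
]
new_lines = [
    "Accepted password for alice from 203.0.113.9 port 40000",
    "winword.exe spawned powershell.exe pid 4412 with encoded command",
]

baseline = build_baseline(baseline_logs)
suspicious = rare_lines(baseline, new_lines)
print(suspicious)
```

Because the first new line differs from the baseline only in its IP and port, it hashes to a known template; the second has no match in the baseline and is surfaced as a lead.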

We have a huge advantage: thanks to our work with a deep, diverse customer base, we can use the data in our own extensive ecosystem to help identify these patterns when building the logic for our hunts (something we can accomplish at scale).

Automation and efficiency

By automating the collection, correlation, and analysis of security data, ML-powered solutions streamline the threat hunting process and create high-fidelity initial leads for investigation, significantly reducing response times.

One main way this is accomplished: the threat hunting group uses the (often extensive) MDR data in their own ecosystems to help identify behavioral patterns that deviate from normal activity. They then use these insights to build the logic for hunts—creating a scalable process.

ML also assists human analysts with incident response. By rapidly analyzing data, providing contextual information, and generating useful insights and recommendations, the algorithms help the security operations center (SOC) make informed decisions and respond effectively to security incidents. They can also augment investigations by running hunts that help expand the scope of an incident or validate the organization’s level of exposure. All of this frees up human analysts to focus on more complex and strategic activities.

(One more benefit: automation of repetitive and time-consuming tasks mitigates many of the burnout issues running rampant in the security industry.)

Threat intelligence and collaboration

ML plays a vital role in threat intel gathering and sharing by allowing organizations to process and analyze large volumes of data from external sources, including open-source feeds and dark web monitoring, as well as internal repositories. This keeps security teams up to date on the current threat landscape, helps identify potential risks, and allows threat hunters to use the threat intelligence to determine the right hypotheses to focus on in upcoming hunting activities.

Additionally, ML-powered threat hunting platforms promote collaboration and improved feedback loops between security teams via sharing of threat intel, insights, and investigation findings. Cooperation and feedback improve threat hunting effectiveness by pooling the collective knowledge and expertise of multiple organizations.

Finally, we’ve confirmed that ML brings additional benefits to threat intelligence itself. Tying ML to threat intel lets us de-dupe IOCs and use the ML models to score them based on relevant features. This allows us to phase out less relevant IOCs from all internal (ours and our clients’) and external (information sharing and analysis centers, open-source repositories, vendors, etc.) sources.
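
Here is a small sketch of what de-duping and scoring IOCs could look like. To keep it self-contained, a hand-weighted formula stands in for the ML model, and the features (recency and number of corroborating sources), the weights, the 90-day window, and the 0.5 cutoff are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Ioc:
    kind: str
    value: str
    sources: set = field(default_factory=set)
    last_seen: date = date.min


def normalize(value):
    """Lowercase, trim, and re-fang common defanged forms so duplicates collide."""
    v = value.strip().lower()
    return v.replace("[.]", ".").replace("hxxp", "http")


def dedupe(records):
    """Merge (kind, value, source, seen) records that refer to the same IOC."""
    merged = {}
    for kind, value, source, seen in records:
        key = (kind, normalize(value))
        ioc = merged.setdefault(key, Ioc(kind, key[1]))
        ioc.sources.add(source)
        ioc.last_seen = max(ioc.last_seen, seen)
    return list(merged.values())


def score(ioc, today):
    """Placeholder relevance score in [0, 1]; a trained model would replace this."""
    age_days = (today - ioc.last_seen).days
    recency = max(0.0, 1.0 - age_days / 90)          # fades out over 90 days
    corroboration = min(len(ioc.sources), 3) / 3     # caps at three sources
    return 0.6 * recency + 0.4 * corroboration


# Hypothetical feed records: (kind, value, source, date seen).
records = [
    ("domain", "Evil-Example[.]com", "isac", date(2024, 5, 1)),
    ("domain", "evil-example.com ", "vendor", date(2024, 5, 20)),
    ("ip", "198.51.100.7", "osint", date(2023, 1, 1)),
]
today = date(2024, 6, 1)

iocs = dedupe(records)
kept = [ioc for ioc in iocs if score(ioc, today) >= 0.5]
print([ioc.value for ioc in kept])
```

The two domain records collapse into one IOC backed by two sources, while the stale, single-source IP scores low and gets phased out.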

Limitations and challenges

Nothing is perfect, of course. ML algorithms depend on the quality and diversity of data they’re trained on, and if the training data is biased, corrupted, or incomplete, false positives or false negatives can undermine the system’s effectiveness (garbage in, garbage out). High-quality, comprehensive training data and regular updates are critical for the success of any ML platform.

Effectiveness also depends on the model used, as well as the care and feeding of that model with the training data. Managing ML demands commitment, especially when the goal is finding suspicious activity.
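
One practical piece of that care and feeding is tracking false positives and false negatives over time. The sketch below, which assumes you have analyst-confirmed labels for a sample of events, computes precision (how many flagged events were truly malicious) and recall (how many malicious events were flagged). The function names and the sample data are hypothetical.

```python
def confusion_counts(flagged, malicious):
    """Tally true/false positives and negatives from paired booleans."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for was_flagged, was_malicious in zip(flagged, malicious):
        if was_flagged and was_malicious:
            counts["tp"] += 1
        elif was_flagged:
            counts["fp"] += 1  # alert fired on benign activity
        elif was_malicious:
            counts["fn"] += 1  # real threat the model missed
        else:
            counts["tn"] += 1
    return counts


def precision_recall(counts):
    """Return (precision, recall), using 0.0 when a denominator is zero."""
    flagged_total = counts["tp"] + counts["fp"]
    malicious_total = counts["tp"] + counts["fn"]
    precision = counts["tp"] / flagged_total if flagged_total else 0.0
    recall = counts["tp"] / malicious_total if malicious_total else 0.0
    return precision, recall


# Illustrative sample: what the model flagged vs. what analysts confirmed.
flagged = [True, True, False, True, False, False]
malicious = [True, False, False, True, True, False]

counts = confusion_counts(flagged, malicious)
precision, recall = precision_recall(counts)
print(counts, precision, recall)
```

A drop in either number after a data or model change is a signal that the training data or the model needs attention.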

Attackers have machine learning, too, and are putting it to use developing more sophisticated tactics. Defenders, therefore, must keep their ML systems ahead of emerging threats by investing in ongoing research and development to improve algorithms and boost resilience against evolving cyber threats.

ML is rewriting the rules of threat hunting. Security orgs can significantly strengthen their AI threat detection capabilities, and do so more proactively. While the tech isn’t a silver bullet, it has the potential to revolutionize the way we protect our digital assets when combined with top-shelf human expertise.

If you’d like to hear more, drop us a line.