TL;DR

- Common cloud misconfigurations and long-lived credentials were the root cause of all cloud incidents we identified in Q1.

- This post details a recent Google Cloud attack on one of our customers.

- Key takeaways: follow least privilege principles; regenerate keys periodically; and avoid putting credentials in code, the source tree, or repositories.

One of the most common ways we see attackers gain unauthorized access to a customer’s cloud environment is through publicly exposed credentials. In fact, common cloud misconfigurations and long-lived credentials were the root cause of 100% of incidents we identified in the cloud in the first quarter of 2022 (more on this in our new quarterly threat report).

It's no surprise, then, that this is exactly how an attacker gained access to a customer's Google Cloud environment in the most recent cloud incident spotted by our security operations center (SOC). Once the attacker acquired credentials for a Google Cloud service account, they used the Google Cloud SDK to try to create a new service account key and maintain access.

The scope of the incident was small because the attacker's Google Cloud API calls failed (score one for the good guys!), but we still learned a lot. In this post, we'll walk through how we detected it, our investigative process, and some key takeaways that can help secure your Google Cloud environment.

Initial lead and detection methodology

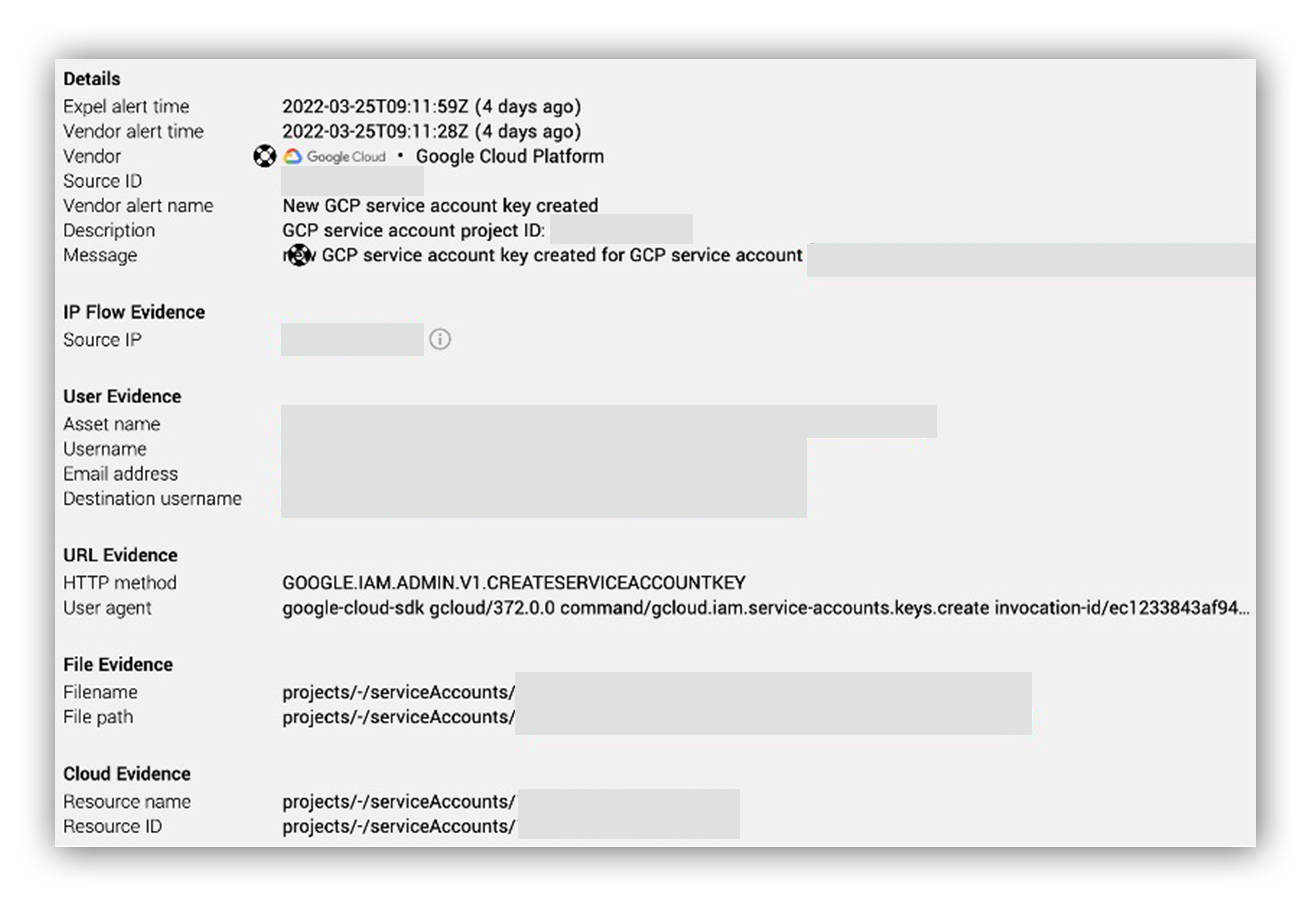

Our initial lead was an alert for an API call to the GCP method google.iam.admin.v1.CreateServiceAccountKey, made through the Google Cloud SDK from an atypical source IP address.
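A rule along these lines can be sketched over exported Cloud Audit Logs entries. This is a minimal illustration, not the detection we actually run: it assumes entries in the LogEntry JSON shape (`protoPayload.methodName`, `protoPayload.requestMetadata.callerIp`, `protoPayload.requestMetadata.callerSuppliedUserAgent`), assumes SDK traffic carries `google-cloud-sdk` in its user agent, and uses a hypothetical `known_ips` baseline in place of whatever notion of "typical" a real detection would use.

```python
from typing import Any, Iterable

# Illustrative set of sensitive IAM methods; extend it for your own environment.
SENSITIVE_METHODS = {"google.iam.admin.v1.CreateServiceAccountKey"}

# Assumption: Cloud SDK (gcloud) requests include this token in the user agent.
SDK_USER_AGENT_TOKEN = "google-cloud-sdk"


def is_suspicious_key_creation(entry: dict[str, Any], known_ips: set[str]) -> bool:
    """Flag a sensitive IAM call made via the Cloud SDK from an IP outside the baseline."""
    payload = entry.get("protoPayload", {})
    metadata = payload.get("requestMetadata", {})
    return (
        payload.get("methodName") in SENSITIVE_METHODS
        and SDK_USER_AGENT_TOKEN in metadata.get("callerSuppliedUserAgent", "")
        and metadata.get("callerIp") not in known_ips
    )


def alerts(entries: Iterable[dict[str, Any]], known_ips: set[str]) -> list[dict[str, Any]]:
    """Return every entry that matches the rule."""
    return [entry for entry in entries if is_suspicious_key_creation(entry, known_ips)]


# Example: one SDK call from an IP that isn't in the hypothetical baseline.
sample = {
    "protoPayload": {
        "methodName": "google.iam.admin.v1.CreateServiceAccountKey",
        "requestMetadata": {
            "callerIp": "198.51.100.7",
            "callerSuppliedUserAgent": "google-cloud-sdk gcloud/380.0.0",
        },
    }
}
print(alerts([sample], known_ips={"203.0.113.10"}) == [sample])  # prints True
```

Matching on all three conditions keeps the rule narrow; any one of them alone (a key creation, an SDK call, a new IP) would be far noisier.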

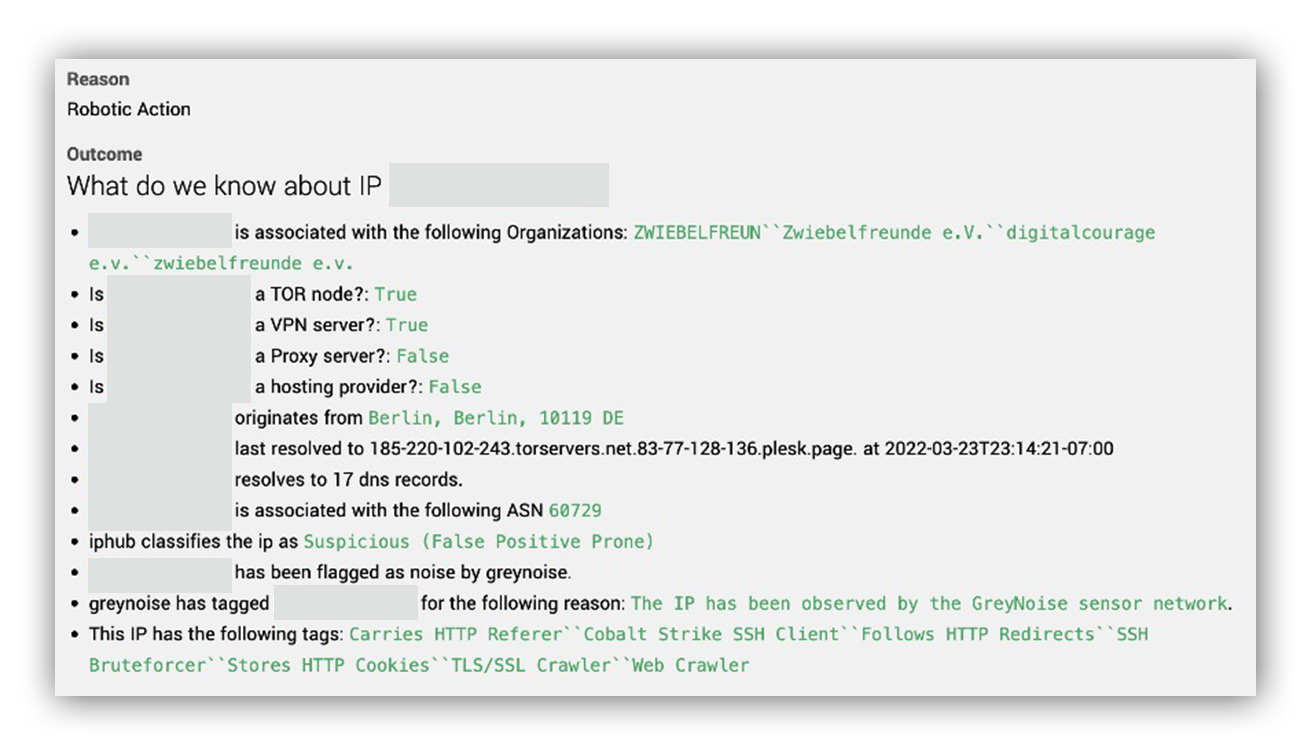

When we surfaced the alert in Workbench™, our friendly bot, Ruxie™, enriched the source IP address with geolocation and reputation information. It turned out that the source IP address was likely a TOR exit node with a history of scanning and brute-forcing. Simply put, a call to the Google Cloud method google.iam.admin.v1.CreateServiceAccountKey via the Google Cloud SDK from this IP address is unusual.
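Ruxie's enrichment isn't public, but the Tor part of the idea can be sketched. The example below assumes you already have a plain-text list of exit-node IPs (one per line, such as the bulk exit list the Tor Project publishes) and a hypothetical local `reputation` mapping. Geolocation lookup is left out.

```python
import ipaddress


def parse_exit_list(text: str) -> set[str]:
    """Parse a plain-text IP list (one address per line, '#' starts a comment)."""
    exits = set()
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        try:
            # Normalize so equivalent spellings of the same address compare equal.
            exits.add(ipaddress.ip_address(line).compressed)
        except ValueError:
            continue  # skip malformed lines instead of failing the whole load
    return exits


def enrich_ip(ip: str, tor_exits: set[str], reputation: dict[str, list[str]]) -> dict:
    """Attach Tor and (hypothetical) reputation context to a source IP."""
    addr = ipaddress.ip_address(ip).compressed
    return {
        "ip": addr,
        "tor_exit": addr in tor_exits,
        "reputation_tags": reputation.get(addr, []),
    }
```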

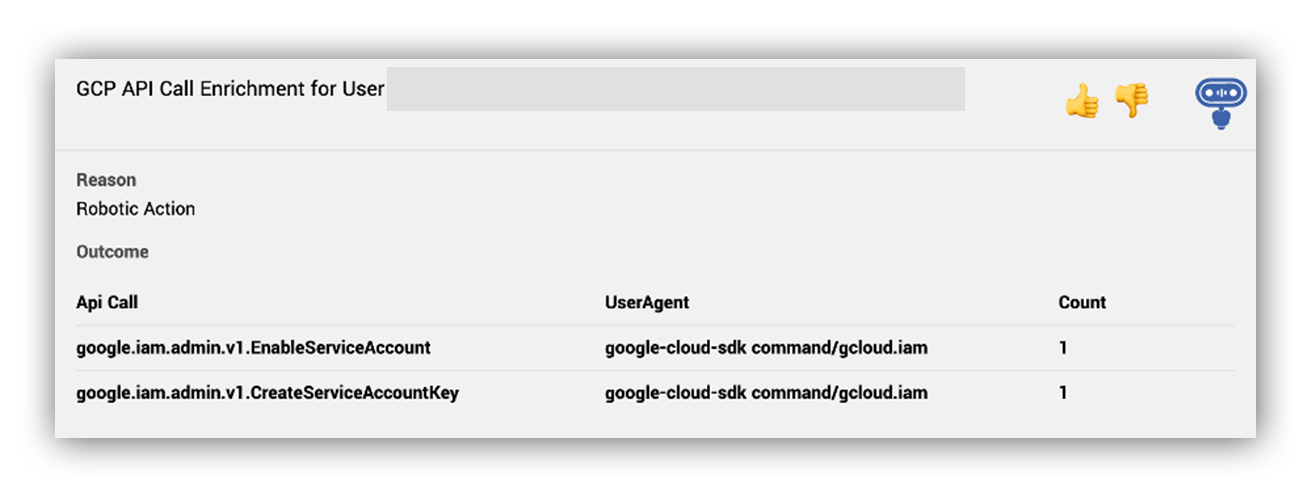

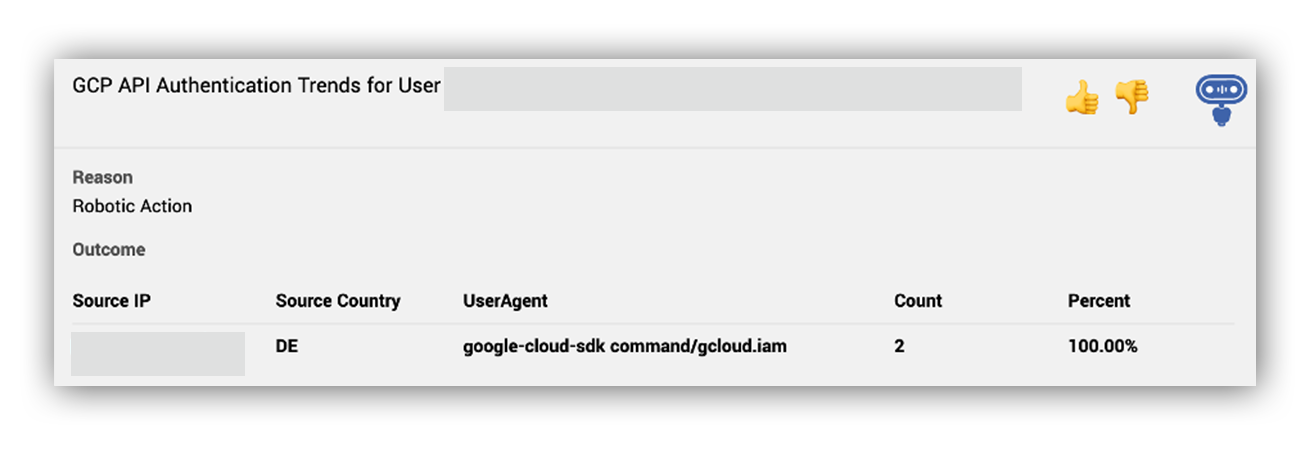

Ruxie also gave our SOC analysts historical context on what other activities this service account had performed in Google Cloud within a 7-day window (shown below). This helped our analysts confirm that the activity was unusual: the only Google Cloud methods this service account had called were google.iam.admin.v1.CreateServiceAccountKey and google.iam.admin.v1.EnableServiceAccount, both via the Google Cloud SDK from a TOR exit node.
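A similar per-principal history can be approximated by counting the methods an account called in the window before the alert. This is a sketch assuming the same LogEntry JSON shape with an RFC 3339 `timestamp` field. The 7-day default mirrors the window above, and `method_history` is a hypothetical helper.

```python
import re
from collections import Counter
from datetime import datetime, timedelta, timezone
from typing import Any, Iterable

_FRACTION = re.compile(r"\.(\d+)")


def parse_ts(value: str) -> datetime:
    """Parse an RFC 3339 UTC timestamp such as '2022-04-01T12:00:00.123456789Z'."""
    value = value.replace("Z", "+00:00")
    # datetime supports microseconds only, so trim (or pad) fractional seconds to 6 digits.
    value = _FRACTION.sub(lambda m: "." + m.group(1)[:6].ljust(6, "0"), value, count=1)
    return datetime.fromisoformat(value)


def method_history(
    entries: Iterable[dict[str, Any]], principal: str, alert_time: datetime, days: int = 7
) -> Counter:
    """Count the methods `principal` called in the `days` leading up to `alert_time`."""
    if alert_time.tzinfo is None:
        alert_time = alert_time.replace(tzinfo=timezone.utc)  # treat naive times as UTC
    start = alert_time - timedelta(days=days)
    counts: Counter = Counter()
    for entry in entries:
        payload = entry.get("protoPayload", {})
        if payload.get("authenticationInfo", {}).get("principalEmail") != principal:
            continue
        if start <= parse_ts(entry["timestamp"]) <= alert_time:
            counts[payload.get("methodName", "<unknown>")] += 1
    return counts
```

A history containing only key-creation and enable calls, as in this incident, stands out against a service account that normally makes routine workload calls.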

Based on this data from Ruxie, we concluded that these service account credentials were likely compromised, so it was time to promote this alert to an incident. We notified the customer, escalated the activity to our SOC emergency on-call team, and began our response.

Investigation and response for a Google Cloud (formerly GCP) incident

Once we knew we had an incident on our hands, our first step was to give the customer remediation actions to stop the immediate threat: disable the service account and reset its credentials. While they did that, we worked to answer our investigative questions:
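The containment step can be scripted against the IAM API. This sketch assumes `iam` is a client built with `googleapiclient.discovery.build("iam", "v1")` and that the caller is allowed to manage the account. It disables the account and deletes its user-managed keys, and leaves issuing a replacement key (if the workload still needs one) as a separate step. `contain_service_account` is a hypothetical helper name.

```python
from typing import Any


def contain_service_account(iam: Any, email: str) -> list[str]:
    """Disable a service account and delete its user-managed keys.

    `iam` is assumed to be a discovery client for the IAM v1 API.
    Returns the resource names of the keys that were deleted.
    """
    accounts = iam.projects().serviceAccounts()
    # "-" lets the API infer the project from the account email.
    name = f"projects/-/serviceAccounts/{email}"

    # Disable first so the account can no longer authenticate while keys are cleaned up.
    accounts.disable(name=name, body={}).execute()

    # Only user-managed keys can be deleted; Google rotates system-managed keys itself.
    response = accounts.keys().list(name=name, keyTypes=["USER_MANAGED"]).execute()
    deleted = []
    for key in response.get("keys", []):
        accounts.keys().delete(name=key["name"]).execute()
        deleted.append(key["name"])
    return deleted
```

Passing the client in, rather than building it inside the function, keeps credentials handling outside the helper and makes it easy to exercise with a stub.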

- How was the Google Cloud service account key compromised?

- What other Google Cloud methods were called by this Google Cloud service account, or its key, before and after the alerted activity?

- What other accounts or activities did we see from the TOR IP address in our customer’s environment?

To answer these questions, we ran searches across the customer's cloud and domain environments and pulled a timeline of the relevant Google Cloud audit logs.
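A timeline that covers the second and third questions can be sketched as a filter over audit-log entries that match either the service account or the source IP, sorted by parsed timestamp (plain string sorting can misorder timestamps with and without fractional seconds). The entry shape and the `build_timeline` name are assumptions for illustration.

```python
import re
from datetime import datetime
from typing import Any, Iterable, Optional

_FRACTION = re.compile(r"\.(\d+)")


def parse_ts(value: str) -> datetime:
    """Parse an RFC 3339 timestamp, trimming fractional seconds to microseconds."""
    value = value.replace("Z", "+00:00")
    value = _FRACTION.sub(lambda m: "." + m.group(1)[:6].ljust(6, "0"), value, count=1)
    return datetime.fromisoformat(value)


def build_timeline(
    entries: Iterable[dict[str, Any]],
    principal: Optional[str] = None,
    caller_ip: Optional[str] = None,
) -> list[tuple[datetime, Optional[str], Optional[str], Optional[str]]]:
    """Return (time, principal, caller IP, method) rows matching either filter, oldest first."""
    rows = []
    for entry in entries:
        payload = entry.get("protoPayload", {})
        who = payload.get("authenticationInfo", {}).get("principalEmail")
        ip = payload.get("requestMetadata", {}).get("callerIp")
        # Match on the account OR the source IP, so other principals seen from that IP show up too.
        if (principal is not None and who == principal) or (caller_ip is not None and ip == caller_ip):
            rows.append((parse_ts(entry["timestamp"]), who, ip, payload.get("methodName")))
    return sorted(rows, key=lambda row: row[0])
```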

Timeline analysis of the Google Cloud audit logs showed that the attacker was unable to create a new service account key or enable the service account, because the compromised service account didn't have the required Identity and Access Management (IAM) permissions.
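In an audit-log entry, a call rejected this way carries a google.rpc status in `protoPayload.status`, where code `7` means `PERMISSION_DENIED`. A minimal sketch of classifying outcomes, assuming that field shape and treating a missing or empty status as success:

```python
from typing import Any

# google.rpc.Code value for PERMISSION_DENIED.
PERMISSION_DENIED = 7


def outcome(entry: dict[str, Any]) -> str:
    """Classify an audit-log entry by its protoPayload.status code."""
    status = entry.get("protoPayload", {}).get("status") or {}
    code = status.get("code", 0)  # a missing or empty status is treated as OK (code 0)
    if code == 0:
        return "succeeded"
    if code == PERMISSION_DENIED:
        return "denied"
    return f"failed (code {code})"


def denied_methods(entries: list[dict[str, Any]]) -> list[str]:
    """List the methods whose calls were rejected for missing permissions."""
    return [
        entry.get("protoPayload", {}).get("methodName", "<unknown>")
        for entry in entries
        if outcome(entry) == "denied"
    ]
```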

After these failed calls, we didn't observe any further activity from the attacker.

Throughout our investigation, we didn't observe any evidence that explained how the credentials were initially compromised. This led us to believe that the credentials were likely exposed publicly. The customer later confirmed that the credentials had been committed to a public GitHub repository, which allowed us to implement better resilience actions for all of our customers moving forward.

Lessons and takeaways

Based on our experience, here are some lessons learned from this incident to help you protect your Google Cloud environment from a similar attack:

- Follow the principle of least privilege. This limits what attackers can do with compromised credentials after they gain access to the cloud. For example, the attacker in this incident couldn't create a new service account key because the compromised service account didn't have the IAM permissions to do so.

- Regenerate your keys periodically. Rotating keys is good security hygiene, and it limits how long an attacker can use older credentials if they're compromised.

- Avoid putting any credentials in code, the source tree, or repositories. This helps prevent credentials from being accidentally exposed. GitHub has a secret scanning service that identifies security keys that have been committed, and Google has a security key detection feature that you can enable.
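Two of these checks lend themselves to small scripts. The sketch below assumes key metadata in the shape returned by the IAM API's `projects.serviceAccounts.keys.list` (`name`, `keyType`, `validAfterTime`) and a 90-day rotation policy chosen only as an example. The second function is a narrow local heuristic that looks for JSON files shaped like service account key files (`"type": "service_account"` plus a `private_key`); it won't catch keys pasted into other file types and is no substitute for GitHub's secret scanning.

```python
import json
import os
import re
from datetime import datetime, timedelta, timezone
from typing import Any, Optional

_FRACTION = re.compile(r"\.(\d+)")


def _parse_ts(value: str) -> datetime:
    """Parse an RFC 3339 timestamp, trimming fractional seconds to microseconds."""
    value = value.replace("Z", "+00:00")
    value = _FRACTION.sub(lambda m: "." + m.group(1)[:6].ljust(6, "0"), value, count=1)
    return datetime.fromisoformat(value)


def stale_keys(
    keys: list[dict[str, Any]], max_age_days: int = 90, now: Optional[datetime] = None
) -> list[str]:
    """Return names of user-managed keys created more than `max_age_days` ago."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [
        key["name"]
        for key in keys
        # System-managed keys are rotated by Google, so only user-managed keys are checked.
        if key.get("keyType") == "USER_MANAGED" and _parse_ts(key["validAfterTime"]) < cutoff
    ]


def find_service_account_key_files(root: str) -> list[str]:
    """Return paths under `root` of JSON files that look like service account key files."""
    hits = []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames[:] = [d for d in dirnames if d != ".git"]  # don't descend into git internals
        for filename in filenames:
            if not filename.endswith(".json"):
                continue
            path = os.path.join(dirpath, filename)
            try:
                with open(path, encoding="utf-8") as fh:
                    data = json.load(fh)
            except (OSError, ValueError):  # unreadable or not valid JSON
                continue
            if isinstance(data, dict) and data.get("type") == "service_account" and "private_key" in data:
                hits.append(path)
    return sorted(hits)
```

Running a check like `find_service_account_key_files` in a pre-commit hook or CI job catches a key file before it reaches a public repository, which is the exposure path in this incident.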

Want to learn more about how Expel can help keep your GCP environment secure? Reach out anytime.