Bug-free code? A network that never goes down? A system with 100 percent uptime?

That’s the stuff developers’ dreams are made of.

But the reality is that with so much information flowing around in modern systems, you need to know if important data ever goes missing. Customers are counting on you to process every last drop of data to provide them with awesome service.

Why you need to audit your data

That might sound overwhelming, but I’ve got good news for you … it’s totally possible to confirm all the right data made it from point A to point B in your system if you build an auditable data ingestion process. At Expel, our data ingestion process involves retrieving alerts from security devices, normalizing and enriching them, filtering them through a rules engine, and eventually landing those alerts in persistent storage.

An auditable process is one that can be repeated over and over with the same parameters and yield comparable results. To make our data ingestion process auditable, we ingest vendor alerts through polling instead of streaming whenever possible.

As all developers know, software has bugs. Auditing validates that the raw data you ingested did not get dropped because of a bug or an improperly handled error during its journey through the backend of your system.

The first step in creating an auditable process you can use again and again is to poll for data from the sources you monitor.

Polling is a method of requesting updated data using specific parameters, such as a time range. It requires scheduling and offset tracking (TL;DR: a lot more code than syslog-type streaming), but a brief network outage doesn’t cost you data, because you can go back and retrieve historical data. That’s critical for someone working in security operations. By polling for alerts, you’ll be able to reproduce the API calls that were made to ingest alerts at any point in time, meaning you can make sure you received those alerts and that your system ingested them correctly.
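To make the scheduling and offset tracking concrete, here’s a minimal sketch of a time-window poller. It isn’t our production code: `PollRequest`, `next_poll`, `poll_once`, the five-minute window and the `fetch` callable are all hypothetical names and values picked for illustration. The point is that every poll is described by plain parameters that get recorded, so the same call can be replayed in an audit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List


@dataclass(frozen=True)
class PollRequest:
    """Parameters that fully describe one poll, so it can be replayed later."""
    device_id: str
    start: datetime
    end: datetime


def next_poll(device_id: str, offset: datetime, now: datetime,
              max_window: timedelta = timedelta(minutes=5)) -> PollRequest:
    """Build the next request, starting where the previous poll stopped."""
    # Never ask for data from the future, and cap the window size (assumed limit).
    end = min(now, offset + max_window)
    return PollRequest(device_id=device_id, start=offset, end=end)


def poll_once(request: PollRequest,
              fetch: Callable[[PollRequest], List[dict]],
              history: List[PollRequest]) -> List[dict]:
    """Run one poll and record its parameters for a later audit."""
    alerts = fetch(request)   # hypothetical stand-in for the vendor API call
    history.append(request)   # the recorded request is what an audit replays
    return alerts
```

After a successful poll, the new offset is simply `request.end`, so the next call to `next_poll` picks up exactly where this one left off.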

By polling for data, you enable auditing. Basically, you can check your work and make sure that data is now where it’s supposed to be in your system.

How to up your (data auditing) game

Ready to crush data ingestion and build an awesome data auditing process?

Here are five pro tips for rocking your data auditing process to make sure you’re never missing anything that’s mission critical.

Tip 1: Identify comparable information in your data chunks

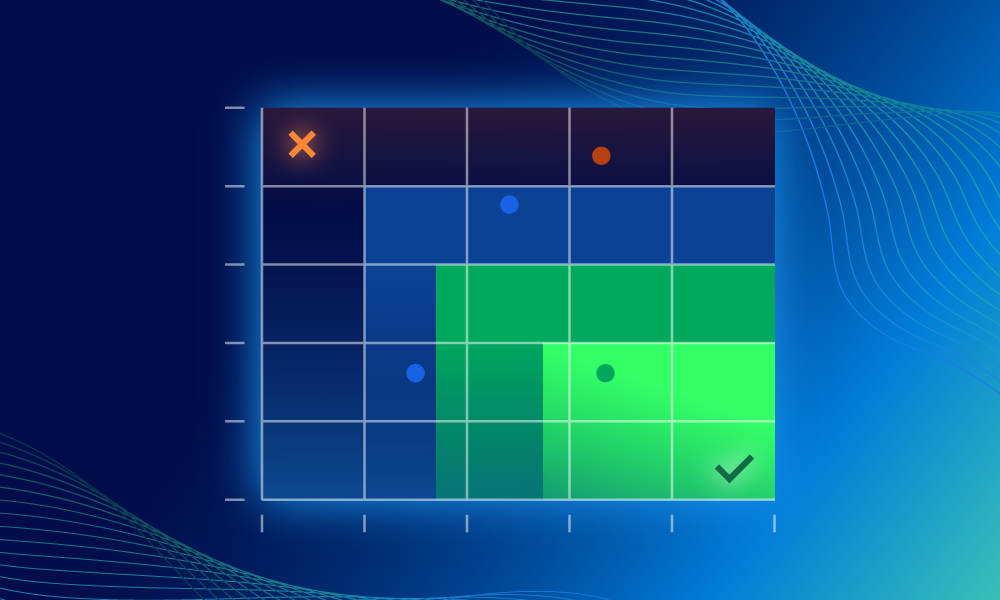

The goal of auditing is to figure out if a piece of data made it all the way to where it needs to be in your system. At Expel, our engineering team uses auditing to make sure that a security alert made it through our enrichment process, our rules engine, and all the other services it’s supposed to filter through without falling out of the pipeline.

To verify that an alert wasn’t dropped along the way, we need to find an element of the data that is comparable, unique and shared between the initial raw alert as it enters the system and the final enriched alert as it comes to rest in persistent storage. Most security devices label their alerts with some kind of unique identifier like “abc123” so that extra alert details can be retrieved through an API request. Here’s what the unique identifier in the raw JSON for an Endgame alert looks like:

    {
      "data": {
        "status": "success",
        "user_display_name": null,
        "family": "detection",
        "id": "423b67b0-461e-4997-949a-ceeeb69051ab",
        "viewed": true,
        "viewed_by": {
          "98101a9d-a98f-405e-91e3-ef7a53e8bcb1": 1,
          "c1848d5b-a77a-4943-a4d1-14d6cd8ea7d1": 1
        },
        "archived": null,
        "user_id": null,
        "severity": "high",
        "indexed_at": "2019-01-01T13:42:15.583Z",
        "system_msg": null,
        "semantic_version": "3.50.4",
        "type": "fileClassificationEventResponse",
        "related_collections": {},
        "local_msg": "Success",
        "system_code": null,
        "local_code": 0,
        "data": {
          ...
        },
        "doc_type": "alert",
        "endpoint": {
          ...
        },
        "task_id": "d67c5d9b-592b-4337-86d9-d85f2b1cb56b",
        "created_at": "2019-01-01T21:50:44Z",
        "machine_id": "3b656860-2d5a-45c2-9241-d8799cd123d7"
      },
      "metadata": {
        "timestamp": "2019-01-30T04:22:12.345999"
      }
    }

Use vendor-crafted unique IDs to your advantage and record them as data enters your system. That way you can compare data that you have in persistent storage to the raw data you are freshly polling in an audit by looking at unique IDs.
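As a rough sketch of that comparison: the code below assumes raw alerts shaped like the sample above (with the vendor ID at `data.id`) and assumes you can list the IDs already sitting in persistent storage. The function names `alert_id` and `missing_ids` are made up for this example.

```python
from typing import Iterable, Set


def alert_id(raw_alert: dict) -> str:
    """Pull the vendor-assigned ID out of a raw alert shaped like the sample."""
    return raw_alert["data"]["id"]


def missing_ids(raw_alerts: Iterable[dict], stored_ids: Iterable[str]) -> Set[str]:
    """Return IDs that the audit poll returned but storage does not contain."""
    polled = {alert_id(alert) for alert in raw_alerts}
    return polled - set(stored_ids)
```

An empty result means every alert the audit re-polled is accounted for; anything else is a list of alerts worth chasing down.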

Tip 2: Identify the potential data entrance and resting points before you start your audit

Think about the potential points at which data might enter your system — like API calls — and jot those down. These should also be points at which you can access the data chunks that you want to compare. These are the bits you’ll reproduce in an audit to compare to the data that has already been processed. Ask yourself, “At what point do I become responsible for the data being ingested?”

Now you have a starting point. To come up with an ending point, ask this question: “At what point does the data stop mutating, or come to a resting place?” It’s probably when the data enters persistent storage — like Postgres or Elasticsearch.

Now you know where you can easily access the final state of your alerts.

Tip 3: Reuse existing infrastructure

Auditing uses lots of operations that are identical to what happens during data ingestion. Now that you know your start and end points, ask this question: “What code already exists to retrieve my data that does not alter or move it?”

You can probably reuse all the queries and tasking operations you already have set up to talk to the various external APIs (the ones that just get the data in the door). If your auditing code runs in a different service from your tasking operations, make sure the services can talk to each other.

For example, we use gRPC to communicate between internal services. So you could put an RPC method in front of your tasking operations that lets you inject a request for audit data alongside the normal polling tasks. Here’s an example protobuf that defines an RPC to reuse tasking operations:

    syntax = "proto3";

    service TaskingOperations {
      rpc CreateTask(CreateTaskRequest) returns (CreateTaskResponse);
    }

    message CreateTaskRequest {
      ...
    }

    message CreateTaskResponse {
      ...
    }

The service that directly executes the tasking operations would implement and serve CreateTask, while the service running audits would call CreateTask. For more information on setting up a gRPC client/server system, see the official gRPC documentation.

Reusing existing infrastructure minimizes the need for developing and maintaining new code, and ensures that your auditing process begins with the same base set of data as routine polling.
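Here’s one way the reuse could look, sketched in plain Python with no gRPC involved. `TaskingOperations` below is an in-process stand-in for the service behind CreateTask; its method signature, the `fetch` callable and the dict used as storage are all assumptions made for the example. What matters is that routine polling and the audit both go through the same retrieval path.

```python
from typing import Callable, Dict, List

# Hypothetical fetch signature: (device_id, start, end) -> raw alerts.
FetchFn = Callable[[str, str, str], List[dict]]


class TaskingOperations:
    """In-process stand-in for the service that serves CreateTask."""

    def __init__(self, fetch: FetchFn) -> None:
        self._fetch = fetch

    def create_task(self, device_id: str, start: str, end: str) -> List[dict]:
        # Retrieval only: nothing here alters or moves the data.
        return self._fetch(device_id, start, end)


def routine_poll(tasking: TaskingOperations, device_id: str, start: str,
                 end: str, store: Dict[str, dict]) -> None:
    """Normal ingestion: fetch alerts, then land them in storage by ID."""
    for alert in tasking.create_task(device_id, start, end):
        store[alert["data"]["id"]] = alert


def audit(tasking: TaskingOperations, device_id: str, start: str,
          end: str, store: Dict[str, dict]) -> List[str]:
    """Audit: re-run the same fetch and list IDs that never reached storage."""
    return sorted(
        alert["data"]["id"]
        for alert in tasking.create_task(device_id, start, end)
        if alert["data"]["id"] not in store
    )
```

Because `audit` calls the same `create_task` as `routine_poll`, any difference it reports comes from what happened after the data got in the door, not from two fetch implementations drifting apart.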

Tip 4: Lighten the load on your data sources

One issue you might encounter with APIs is that they aren’t all equipped to handle large queries. So you have to be careful about how often you ask for data and the quantity of data you want to query. Otherwise, requests for data being used to audit might interfere with your primary task of ingesting new data.

We solve this problem by staggering our requests: we launch an audit only once the full time window of data we want to audit already exists in our system. Say we want to audit two hours’ worth of data from 10:00 am – 12:00 pm for device X and it’s 10:00 am. We wait until our polling offset for device X is 12:00 pm and then launch the audit.

This solves two problems. First, no unnecessary extra operations are happening from 10:00 am to 12:00 pm that may interfere with the device’s ability to return data to our primary alert polling operation. Second, if newly discovered evidence changes the outlook on previously dismissed alerts, some security devices can escalate these alerts “in the past” — meaning their delivery is delayed and that the data doesn’t come to us in real time. Even though we account for these situations in our primary alert polling operations, auditing later lets us check that we’ve successfully captured those alerts that are delayed in getting to us.
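The “wait for the offset” check is small enough to sketch. This assumes the polling offset and the end of the audit window are both available as `datetime` values; `audit_ready` is a name invented for the example.

```python
from datetime import datetime


def audit_ready(polling_offset: datetime, window_end: datetime) -> bool:
    """True once routine polling has covered the whole window to be audited."""
    return polling_offset >= window_end
```

With the 10:00 am – 12:00 pm window for device X, this returns False while the offset is still at 10:00 am and True once it reaches 12:00 pm, which is when the audit would launch.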

Tip 5: Use distributed tracing

Distributed tracing (we use Stackdriver Trace for this) is essential to follow the life cycle of a piece of data. This lets you know where that data is at all times. If your auditing process tells you a piece of data might be missing, you have to find a way to confirm whether that’s true. Simply seeing that you polled some data and it didn’t make its way into persistent storage doesn’t tell you when, where or how it was dropped. Knowing how a piece of data was dropped paves the way to fixing the root cause of the bug.

Use distributed tracing to pinpoint the last operation that touched and processed the data, and the actions related to it. Then you have a much better understanding of where things (literally) went sideways.
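To show the idea without tying it to any tracing product, here’s a toy in-memory stand-in. It is not Stackdriver Trace or any real tracing API; `StageLog` and the stage names are hypothetical. Each pipeline stage records that it handled an alert, and when an audit flags a missing ID you ask which stage saw it last.

```python
from collections import defaultdict
from typing import DefaultDict, List, Optional


class StageLog:
    """Toy in-memory stand-in for a tracing backend (not a real tracing API)."""

    def __init__(self) -> None:
        self._stages: DefaultDict[str, List[str]] = defaultdict(list)

    def record(self, alert_id: str, stage: str) -> None:
        """Note that a pipeline stage finished processing this alert."""
        self._stages[alert_id].append(stage)

    def last_stage(self, alert_id: str) -> Optional[str]:
        """Return the last stage that touched the alert, or None if never seen."""
        stages = self._stages.get(alert_id)
        return stages[-1] if stages else None
```

If an alert’s last recorded stage is, say, `"enrich"` and it never shows up in storage, the stages after enrichment are the first place to look.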

Next steps

As you get into a regular auditing routine, the integrity and reliability of your data increase, and that’s good news for your org and customers.

Do you already audit your data? Are there more auditing tips and tricks you’ve found that we should add to this list? Drop us a note or tweet to us at @expel_io to share your pointers!